OWASP Top 10 (2025 List) for Python Devs

Episode Deep Dive

Guest introduction and background

Tanya Janca (aka SheHacksPurple) is returning to Talk Python To Me, and her resume keeps getting deeper. She's a former software developer who, as she puts it, "went to the dark side" into application security, and has since written two books on secure coding, the most recent being Alice and Bob Learn Secure Coding. She teaches secure coding and secure use of AI to large enterprises, speaks regularly at conferences (RSA, OWASP Global AppSec, and many more), and is a Lifetime Distinguished Member of OWASP after more than a decade of volunteering. She's a member of the OWASP Top 10 team and led the public rollout of the 2025 edition. Outside of teaching, Tanya just launched a new podcast called DevSec Station with five-to-ten minute secure coding lessons, and she's actively lobbying the Canadian Parliament to pass a secure coding law (petition E-7115) that she hopes will create a domino effect for other countries. You'll also find her writing at shehackspurple.ca where she's been digging into behavioral economics and how to help developers "fall into the pit of success" on security.

- shehackspurple.ca

- securemyvibe.ca (her free secure coding prompt library)

- DevSec Station podcast (search wherever you get podcasts)

- Alice and Bob Learn Secure Coding

What to Know If You're New to Python

A short primer so newer Pythonistas can follow every piece of this conversation without getting lost:

- Web frameworks: The episode references Django, Flask, FastAPI, and Pyramid. These are the most common Python web frameworks. Understanding that web frameworks receive HTTP requests and return HTML or JSON will make the attack scenarios click.

- ORMs and raw SQL: Tanya and Michael talk a lot about parameterized queries. In Python, ORMs like SQLAlchemy and Django ORM automatically parameterize; raw SQL with string concatenation does not. This distinction is what makes SQL injection possible.

- Package managers and lock files: Tools like pip, uv, and pip-compile install third-party code from PyPI. Lock files pin exact versions so you get the same code every build, which is central to the supply-chain discussion.

- Serialization formats: The episode mentions Python's pickle and YAML loaders. These are dangerous defaults because they can execute arbitrary code when deserializing untrusted data.
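
To make the serialization point concrete, here is a minimal sketch of why pickle is unsafe for untrusted input. The Malicious class and the harmless "6 * 7" expression are illustrative assumptions, not something shown in the episode; a real attacker would choose a far more damaging callable.

```python
import json
import pickle


class Malicious:
    """Hypothetical stand-in for attacker-controlled data."""

    def __reduce__(self):
        # pickle calls eval("6 * 7") while loading these bytes.
        # A real payload would call something like os.system instead.
        return (eval, ("6 * 7",))


untrusted_bytes = pickle.dumps(Malicious())

# Loading runs the attacker's expression instead of rebuilding an object.
loaded = pickle.loads(untrusted_bytes)
print(loaded)  # 42

# JSON can only produce plain data: dicts, lists, strings, numbers,
# booleans, and None. Nothing is executed while parsing.
safe = json.loads('{"username": "alice", "roles": ["viewer"]}')
print(safe["roles"])
```

For YAML, PyYAML's yaml.safe_load plays the same role as json.loads here: it builds plain data rather than arbitrary Python objects.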

Key points and takeaways

The 2025 OWASP Top 10 is here, and it's freshly reorganized

The OWASP Top 10 is an awareness document listing the most critical web application security risks, drawn from millions of real-world data points plus community surveys. The 2025 edition dropped on December 31, 2025 (yes, right at the wire so they could keep "2025" on the branding), and Tanya says community feedback was the smoothest it has ever been. Each item is a category bucket, not a single bug, and the categories are ordered worst-first: the top of the list is what causes the most damage and is easiest to find and exploit. A key editorial choice this round was to reject vague categories like "poor code quality" because the only mitigation advice would be "suck less," which isn't actionable.

- owasp.org/www-project-top-ten

- github.com/OWASP/Top10 (historical versions from 2003 through 2025)

#1 Broken Access Control is still everywhere
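
As a rough illustration of the two traps this item describes (authentication without authorization, and user-supplied file paths), here is a framework-free sketch. The User class, can_delete_report, and safe_upload_path are hypothetical names invented for this example. In Django, the authorization half would more likely be expressed with the permission_required or user_passes_test decorators alongside login_required.

```python
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class User:
    """Hypothetical user model for illustration only."""
    name: str
    is_authenticated: bool = False
    roles: set = field(default_factory=set)


def can_delete_report(user: User, report_owner: str) -> bool:
    """Authorization check for one specific record, run on every request."""
    if not user.is_authenticated:
        # Being logged in is necessary but not sufficient.
        return False
    return "admin" in user.roles or user.name == report_owner


def safe_upload_path(base_dir: Path, user_supplied: str) -> Path:
    """Resolve a user-supplied name and refuse anything outside base_dir."""
    base = base_dir.resolve()
    candidate = (base / user_supplied).resolve()
    # is_relative_to requires Python 3.9 or newer.
    if not candidate.is_relative_to(base):
        raise PermissionError("path escapes the upload directory")
    return candidate


alice = User("alice", is_authenticated=True)
print(can_delete_report(alice, "bob"))  # False: logged in, but not the owner
```

Calling safe_upload_path(Path("uploads"), "../../etc/passwd") raises PermissionError, because the resolved path lands outside the uploads directory.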

Broken access control leads the list because it is rampant, easy to exploit, and very hard to get right. Every page, every record, every API call should confirm not just that a user is logged in but that they still hold the role required for that specific resource. A common Python-flavored trap is a Django view protected by @login_required (authentication) with no corresponding authorization check that the user is actually an admin. Another is path traversal: letting a user supply ../../etc/passwd-style paths in file operations. Tanya's friend Katie Paxton-Fear, a bug bounty researcher, says she has literally never failed to find broken access control in an API.

#2 Security Misconfiguration: small defaults, massive blast radius

Misconfigurations are scanner-bait, and attackers run scanners against every public IP constantly. Classic Python examples: DEBUG = True in Django production, missing HSTS, missing content security policies, and allowing the app to be framed. The conversation dives into a recent, very concrete example: the Claude Code source code was leaked because a JavaScript source map file wasn't added to .gitignore and wasn't suppressed at build time, essentially shipping "debug mode in production." Another beautifully sneaky one: on Ubuntu/Debian servers, Docker port mappings bypass UFW (Uncomplicated Firewall) entirely, so a 5432:5432 mapping in docker-compose.yml can expose your Postgres to the public internet even if your firewall rules say "deny." The fix is binding to 127.0.0.1 explicitly or keeping those services on an internal Docker network.

#3 Software Supply Chain Failures (expanded and more urgent than ever)

Previously this category was just "vulnerable and outdated components." It has been expanded to cover everything you use to create and maintain software: your browser plugins, your CI pipelines, your build settings, your developer workstation, the integrity of packages in transit, and especially the developer themselves. Tanya points out that breaches like LastPass started by compromising a single developer (in that case via an outdated Plex server on a home network) and cascaded into the whole organization. For Python devs specifically: pin dependencies with uv lock or pip-compile, run pip-audit in CI, and critically, do not auto-update to latest. In the recent LiteLLM-style incident, a compromised dependency lived in the wild for only about 30 minutes and still reached roughly 50,000 machines via automatic updates. uv's ability to "only use versions older than N days" is now a safer default.

#4 Cryptographic Failures: hash, salt, and maybe pepper

This is about not encrypting when you should, using outdated algorithms, or dropping out of TLS anywhere along the path. For passwords specifically, you should hash (not encrypt), salt (per-user, not secret), and optionally pepper (per-system, secret). Use a memory-hard algorithm like Argon2 rather than MD5 or SHA-1. Tanya's advice for AI-assisted code: don't just ask for "secure" code, ask the AI to list its security assumptions so you can see where it expects you to harden things later.
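
The hash, salt, and pepper recipe can be sketched with the standard library alone. One swap to note: Python ships no Argon2, so this example uses hashlib.scrypt, which is also memory-hard, purely as a stand-in. Following the episode's advice in production would mean a maintained Argon2 library such as argon2-cffi. The PEPPER value, function names, and scrypt cost parameters are assumptions for illustration.

```python
import hashlib
import hmac
import secrets

# Assumption: a real pepper is loaded from a secrets manager or environment
# variable, never hard-coded and never stored in the database.
PEPPER = b"example-pepper-kept-outside-the-database"


def _derive(password: str, salt: bytes) -> bytes:
    # Mix in the secret pepper with HMAC, then run a memory-hard KDF.
    peppered = hmac.new(PEPPER, password.encode("utf-8"), hashlib.sha256).digest()
    return hashlib.scrypt(peppered, salt=salt, n=2**14, r=8, p=1, dklen=32)


def hash_password(password: str) -> tuple:
    """Return (salt, digest); both are stored alongside the user record."""
    salt = secrets.token_bytes(16)  # unique per user, not secret
    return salt, _derive(password, salt)


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(_derive(password, salt), expected)


salt, digest = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, digest))  # True
print(verify_password("wrong guess", salt, digest))  # False
```

Because each user gets a fresh salt, two users with the same password end up with different digests.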

#5 Injection: not just little Bobby Tables

SQL injection remains the marquee example, but the category is broader: anywhere data can be interpreted as code, you have the risk. Python's ORMs and parameterized queries defeat most SQL injection if you use them correctly. But MongoDB has its own flavor: if your login endpoint accepts a JSON body and you splat it straight into a find_one() call as a dict, an attacker can send {"username": "admin", "password": {"$gt": ""}} and authenticate as anyone. Pickle and unsafe YAML deserialization are other Python-specific gotchas. The defense stack: parameterize, validate with an allow-list (not a block-list), and escape (don't silently strip) special characters like the apostrophe in "O'Malley."

- owasp.org - Injection

- cheatsheetseries.owasp.org - Injection Prevention

- sqlalchemy.org (parameterized queries by default)
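
The defense stack for the injection item (parameterize, validate against an allow-list, reject operator objects) might look roughly like this. It uses the standard library's sqlite3 so it runs anywhere. The table, the NAME_PATTERN rule, and the login_filter helper are hypothetical, and no real MongoDB driver is involved: the last function only shows the type check you would apply before building a filter.

```python
import re
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT)")
conn.execute("INSERT INTO users VALUES (?)", ("O'Malley",))


def find_user(name: str) -> list:
    # The ? placeholder sends the value separately from the SQL text,
    # so an apostrophe or "' OR '1'='1" is treated as plain data.
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()


# Hypothetical allow-list: letters, spaces, apostrophes, hyphens, 1 to 64 chars.
NAME_PATTERN = re.compile(r"[A-Za-z' -]{1,64}")


def validate_name(name: str) -> str:
    if not NAME_PATTERN.fullmatch(name):
        raise ValueError("name contains characters outside the allow-list")
    return name


def login_filter(body: dict) -> dict:
    """Build a lookup filter from a JSON body, accepting only plain strings."""
    username = body.get("username")
    password = body.get("password")
    if not isinstance(username, str) or not isinstance(password, str):
        # Rejects {"password": {"$gt": ""}} and any other operator object.
        raise ValueError("username and password must be strings")
    # Look up by username only; the password is checked against the stored
    # hash afterwards, never embedded in the query.
    return {"username": username}


print(find_user("O'Malley"))
print(find_user("x' OR '1'='1"))
```

The first lookup finds the row with the apostrophe intact, and the second returns an empty list because the injection attempt is compared as a literal string.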

#6 Insecure Design: the plan itself is broken

This one was added in 2021 and is the only category focused on the design rather than implementation. The code may look great, but something important is simply absent: no rate limiting on login, client-side-only validation, no threat model, no security requirements, no API gateway in front of an internet-facing API. A design review and a threat model before a line of code gets written are the primary defenses. Tanya's preference: security requirements should be treated as non-negotiable up front, not appended after the fact.
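
Rate limiting on login is one of the missing pieces named above, and the idea fits in a few lines. This is a sketch under stated assumptions: LoginRateLimiter is a hypothetical in-memory, single-process sliding window, whereas a real deployment would more likely enforce this at an API gateway or in a shared store so that limits hold across workers.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class LoginRateLimiter:
    """Allow at most max_attempts per key within a sliding time window."""

    def __init__(self, max_attempts: int = 5, window_seconds: float = 60.0):
        self.max_attempts = max_attempts
        self.window_seconds = window_seconds
        self._attempts = defaultdict(deque)  # key -> timestamps of attempts

    def allow(self, key: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        attempts = self._attempts[key]
        # Drop attempts that have aged out of the window.
        while attempts and now - attempts[0] >= self.window_seconds:
            attempts.popleft()
        if len(attempts) >= self.max_attempts:
            return False
        attempts.append(now)
        return True


limiter = LoginRateLimiter(max_attempts=3, window_seconds=60)
results = [limiter.allow("alice", now=t) for t in (0, 1, 2, 3)]
print(results)  # [True, True, True, False]
```

The key could be a username, a source IP, or both. Which one to use is a design decision that belongs in the threat model.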

#7 Authentication Failures: stop writing your own

The single biggest lesson: don't roll your own authentication. Buy (or integrate with) a tested product like Auth0, Okta, Azure AD, or Keycloak. Beyond that, protect against credential stuffing and brute force, enforce non-terrible passwords, and ship MFA. MFA doesn't have to be painful; a "trust this device/browser/network" fingerprint can make the second factor only kick in on unusual events or dangerous actions like account deletion. Passkeys are making this meaningfully better.

#8 Software or Data Integrity Failures

This is about making sure what you think you have is actually what you have, whether that's a downloaded library, a CDN-hosted script, or a data payload in transit. A great practical fix: sub-resource integrity (SRI) hashes on every <script> or <link> you pull from a CDN. Tanya and Michael call out that the Tailwind CDN snippet on jsDelivr's own page doesn't include SRI by default, which is exactly the kind of "the docs nudge you away from the pit of success" problem that keeps this risk alive. For truly paranoid situations (medical devices, finance), integrity failures tend to be silent, which makes them far scarier than outages.

#9 Security Logging and Alerting Failures

Devs log for debugging, but security logging is a different discipline: log every security-relevant control, both when it succeeds and when it fails, with timestamps and user IDs. If someone tries to log in 100 times per second, you want all 99 failures in the log, not just the single success that got them in. Tanya shares a client story where Visa called to say 27 customers had been breached and there were zero application logs to investigate, so they ended up rewriting the app from scratch to add proper logging. Without logs, there's no chain of custody, no root cause, and no way to prevent recurrence.
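
A minimal sketch of that discipline with the standard logging module follows. The logger name, the record_login_attempt helper, and the field names are assumptions for illustration. The point is that both outcomes are recorded with a timestamp, a user ID, and a source, and that secrets never reach the log.

```python
import logging

logging.basicConfig(
    level=logging.INFO,
    # asctime supplies the timestamp investigators will need later.
    format="%(asctime)s %(name)s %(levelname)s %(message)s",
)
security_log = logging.getLogger("security")


def record_login_attempt(user_id: str, source_ip: str, success: bool) -> None:
    """Log every attempt, success or failure, with who and from where."""
    outcome = "success" if success else "failure"
    level = logging.INFO if success else logging.WARNING
    # Log the event only; never the password or any other secret.
    security_log.log(
        level, "login outcome=%s user_id=%s source_ip=%s", outcome, user_id, source_ip
    )


record_login_attempt("user-42", "203.0.113.5", success=False)
record_login_attempt("user-42", "203.0.113.5", success=True)
```

Alerting would sit on top of this, for example a rule that fires when failures for one user_id or source_ip spike. That part depends on whichever log platform you use.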

#10 Mishandling of Exceptional Conditions (brand new)

This one is fresh for 2025. It's the try: ... except: pass pattern, or printing a full stack trace to the user, or failing to use a database transaction so partial writes corrupt your data. It tied with "lack of application resilience" for the #10 spot, and Tanya successfully argued that solving mishandled exceptions almost always solves resilience, but not vice versa, which got it on the list. Think about recovery paths, transaction boundaries, and never leaking exception internals to an end user.

The "next step" bonus items: vibe coding, memory safety, and application resilience

Below the official Top 10, the team included three honorable mentions they couldn't let go. Vibe coding (letting AI write your app end-to-end without human review, sometimes in "dark factories" with zero humans in the loop) is producing enormous volumes of code that was never reviewed by a security team. Memory safety remains critical even though most Python devs feel insulated from it (your C extensions and dependencies still count). Lack of application resilience tied with #10 and is called out explicitly.

AI can help you find these, if you ask the right way

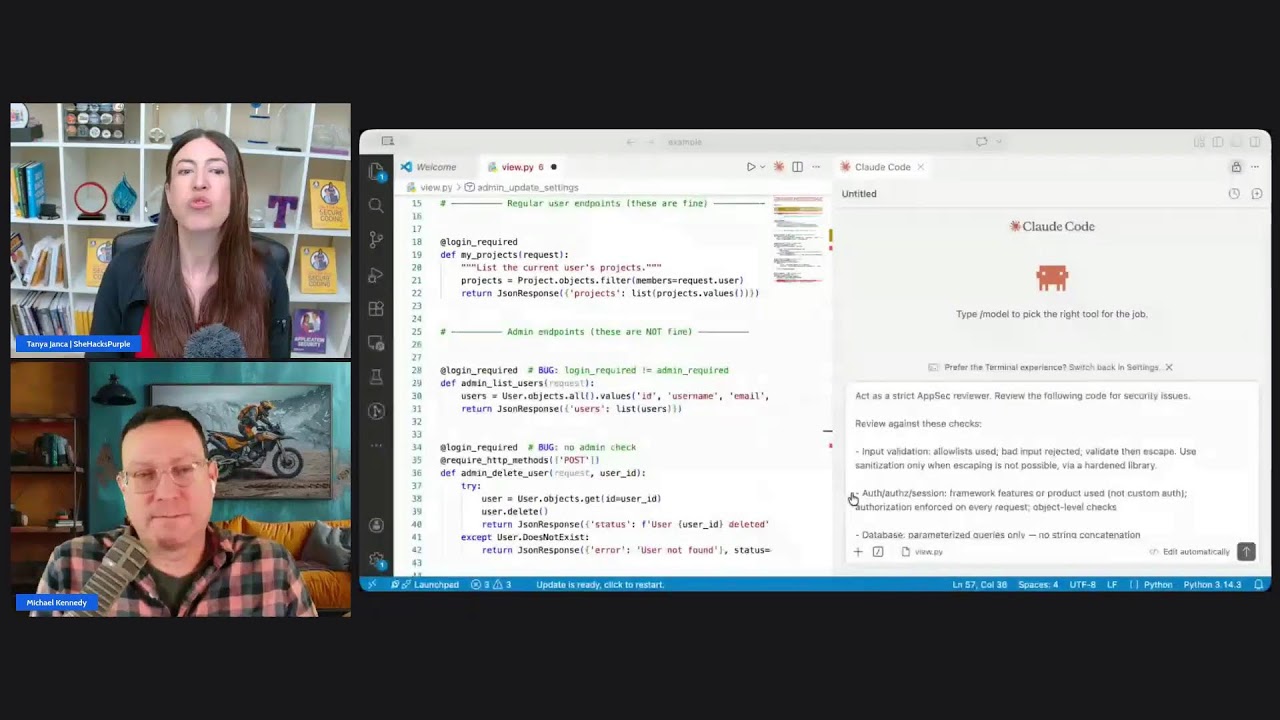

Tanya's central thesis for the AI-era portion of the episode: models were trained on demo code from GitHub, which is overwhelmingly insecure. So the default output is insecure, and saying "make it secure" isn't enough. At RSA she gave a talk called Insecure Vibes where she asked Claude to build a login function for an insulin pump; it did, and then when asked to review its own output, it found critical vulnerabilities in the code it had just produced. The fix is structured prompting: tell the AI what "secure" means for your context, ask it to enumerate its security assumptions, and review its output with an explicit security review prompt. In the live demo on the show, Michael fed 62 lines of code to Tanya's review prompt and the tool flagged more vulnerabilities than there were lines of code.

The three-tier prompt library: bake security in, don't bolt it on

Tanya's free prompt library on securemyvibe.ca is structured in three levels. Level 1 is a persistent memory/skill prompt that runs every single time code is generated; it distills most of the first two-thirds of Alice and Bob Learn Secure Coding into rules the AI must follow, and it forces the AI to surface its security assumptions. Level 2 is scenario-specific: "I'm building an API," "I'm building a serverless function," and sets security requirements before any code is generated. Level 3 is feature-specific deep dives like "I'm implementing a user login, hash the passwords securely using X." The library is free with a newsletter signup.

OWASP is more than the Top 10: chapters, cheat sheets, and community

The Top 10 is the famous artifact, but OWASP has over 100 active open source projects, 300+ local chapters worldwide, and a Slack with thousands of members. Most practically, the OWASP Cheat Sheet Series has over 100 cheat sheets covering exactly the topics devs actually Google: authentication, authorization, CSRF, CORS, logging, password storage, input validation, and more. Next time you're about to search the internet for a security how-to, add "OWASP cheat sheet" to the query and skip straight to authoritative advice.

Canada's secure coding law (Petition E-7115)

Tanya is trying to push a secure coding law through the Canadian Parliament that would require secure coding standards across all governmental organizations, and she even wrote the standard herself as a draft. She has enough signatures and a sponsoring MP, so it's going to vote. Canadians can sign and, more importantly, call or email their MP to urge a yes vote. Tanya's logic: if Canada leads, it's momentum for every other country, and Canada has led on privacy law and quantum law before.

Interesting quotes and stories

"I was a software developer that switched into application security. I went to the dark side, Michael." -- Tanya Janca

"Help developers and security folks fall into the pit of success. You've got to climb out of the thing you're supposed to do and actively do it wrong." -- Michael Kennedy, paraphrasing Scott Guthrie

"I have never, not once, found broken access control in an API. Like, never once." -- Katie Paxton-Fear, as quoted by Tanya

"You're actually having a penetration test done all the time if you're on the internet. You just aren't receiving the report." -- Tanya Janca

"It's more efficient to not worry about the security. It'll save you some tokens." -- Tanya, on why AI-generated code skips security by default

"So I asked for it to be secure. So you can't just say, I want to be secure. You have to say, and this is what secure means." -- Tanya Janca

"Visa called us and 27 of our customers got popped, and we need you to go investigate. And turns out they didn't have any logs. What am I supposed to investigate? Walk around the building with a magnifying glass and a hat on?" -- Tanya Janca

"The whole Top 10 is only sand in the gears until something happens and then it's your fault for not doing it." -- Michael Kennedy

"I gave it 62 lines of code. And you are going to have more vulnerabilities than you have lines of code." -- Tanya Janca, during the live secure-code review demo

Key definitions and terms

- OWASP: The Open Worldwide Application Security Project. An international nonprofit and community focused on software security, known for the Top 10 list, 100+ open source projects, and the Cheat Sheet Series.

- Top 10: OWASP's awareness document listing the ten most critical categories of web application security risk, updated roughly every four years.

- Parameterized query: A SQL (or NoSQL) query where user-supplied values are sent separately from the command text, so they cannot be interpreted as code. The primary defense against injection.

- Allow-list vs. block-list: Allow-list validation accepts only known-good input (e.g., letters and digits); block-list tries to enumerate bad characters. Block-lists always lose; allow-lists usually win.

- Salt: A unique, non-secret random value appended to a password before hashing, different per user. Defeats rainbow tables.

- Pepper: A secret value appended to passwords before hashing, the same for every user in a system. Stored outside the database.

- Argon2: A modern, memory-hard password hashing algorithm, winner of the Password Hashing Competition. Preferred over MD5, SHA-1, or even bcrypt for new systems.

- Sub-resource Integrity (SRI): A browser feature that lets an HTML page specify a cryptographic hash for a script or stylesheet loaded from a CDN. The browser refuses to execute it if the hash doesn't match.

- Reachability (in SCA tools): Analysis that determines whether your code actually calls the vulnerable function path in a dependency, rather than just flagging every known CVE in every installed package.

- Supply chain: Everything you use to build and maintain software, including packages, CI, browser plugins, the developer workstation, and even the developer.

- Dark factory: A software-development organization that has replaced all human developers with end-to-end AI agents, with no human in the loop.

- Vibe coding: Generating and shipping software primarily through natural-language prompts to AI, typically without traditional code review.

- Credential stuffing: Using username/password pairs leaked from one breach to try to log in to unrelated sites, exploiting password reuse.

- Memory-hard hashing: A hashing algorithm designed so that computing it requires significant RAM, making GPU and ASIC attacks uneconomic.
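
To make the Sub-resource Integrity entry concrete, the sketch below computes an integrity value for a file and places it in a script tag. The sri_hash helper, the sample bytes, and the cdn.example.com URL are placeholders. In practice you would hash the exact file the CDN serves for the version you pinned.

```python
import base64
import hashlib


def sri_hash(content: bytes, algorithm: str = "sha384") -> str:
    """Return an integrity value such as 'sha384-...' for the given bytes."""
    if algorithm not in {"sha256", "sha384", "sha512"}:
        raise ValueError("SRI supports sha256, sha384, and sha512")
    digest = hashlib.new(algorithm, content).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"


# Stand-in for the bytes of the script as served by the CDN.
script_bytes = b"console.log('hello');"
integrity = sri_hash(script_bytes)

tag = (
    '<script src="https://cdn.example.com/app.js" '
    f'integrity="{integrity}" crossorigin="anonymous"></script>'
)
print(tag)
```

If the CDN later serves different bytes, the browser computes a different hash and refuses to run the script.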

Learning resources

If this conversation lit a fuse under your security curiosity, here are some Talk Python Training courses that map directly to the topics in the episode. Use them to go deeper on the technical foundations that make the Top 10 easier to apply in your own projects.

- Rock Solid Python with Python Typing: Type hints catch entire categories of bugs that would otherwise become runtime errors (including some security-relevant ones) and power frameworks like Pydantic and FastAPI.

- Secure APIs with FastAPI and the Microsoft Identity Platform: A direct hit on authentication failures and insecure design; builds JWT/OAuth2/OIDC-based auth on FastAPI with Azure AD.

- Modern APIs with FastAPI and Python: Ideal grounding for the injection, access control, and design conversations.

- Django: Getting Started: Understand where @login_required, permissions, and DEBUG settings live in the Django stack.

- MongoDB with Async Python: Useful context for the MongoDB injection examples and safe query patterns.

- Managing Python Dependencies: Directly applies to supply chain failures: pinning, lock files, and choosing quality libraries.

- Getting started with pytest: Testing is the other half of reliable code; pytest is the on-ramp.

- Python for Absolute Beginners: If you're brand new to Python, start here so the code examples in this episode make sense.

Overall takeaway

The 2025 OWASP Top 10 is less about ten shiny new bugs and more about a shift in how we need to think about software: the supply chain now stretches from your IDE to your CI to your browser to the developer themselves, AI is cranking out code faster than any security team can review it, and "failing silently" is quickly becoming the most dangerous failure mode in the industry. Tanya's guidance throughout this episode is refreshingly practical: don't roll your own auth, pin but update your dependencies, log every security control, review the design before the code, and when you use AI, tell it what "secure" actually means. Python developers are in a good spot here: the frameworks we use (Django, FastAPI, SQLAlchemy, Pydantic) already do a lot of the heavy lifting if we let them. The real move is to pair that framework-level protection with deliberate prompts, explicit checklists, and a default mindset of making the secure path the easy path, so future-you and future-users fall into the pit of success. Go read the Top 10, bookmark the Cheat Sheet Series, grab Tanya's free prompt library at securemyvibe.ca, and next time you ship something, ship it with logs, transactions, and a tested auth provider. Your future self will thank you.
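
The "ship it with logs, transactions" advice, together with the #10 guidance on not swallowing exceptions or leaking stack traces, can be sketched as follows. The accounts table and the transfer function are hypothetical, and sqlite3 is used only because it ships with Python.

```python
import logging
import sqlite3

log = logging.getLogger("app")

conn = sqlite3.connect(":memory:")
with conn:
    conn.execute(
        "CREATE TABLE accounts ("
        "name TEXT PRIMARY KEY, balance INTEGER CHECK (balance >= 0))"
    )
    conn.executemany("INSERT INTO accounts VALUES (?, ?)", [("alice", 100), ("bob", 0)])


def transfer(db: sqlite3.Connection, src: str, dst: str, amount: int) -> dict:
    """Move funds atomically and return a message that is safe to show users."""
    try:
        with db:  # commits on success, rolls back if an exception escapes
            db.execute(
                "UPDATE accounts SET balance = balance + ? WHERE name = ?", (amount, dst)
            )
            # If this debit violates the CHECK constraint, the credit above
            # is rolled back too, so no partial write survives.
            db.execute(
                "UPDATE accounts SET balance = balance - ? WHERE name = ?", (amount, src)
            )
    except sqlite3.Error:
        # Full details, including the traceback, go to the log only.
        log.exception("transfer failed src=%s dst=%s", src, dst)
        return {"ok": False, "error": "Transfer failed. Please try again."}
    return {"ok": True}


print(transfer(conn, "alice", "bob", 150))
print(dict(conn.execute("SELECT name, balance FROM accounts")))
```

The failed transfer leaves both balances untouched, and the user sees a generic message rather than exception internals.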

Links from the show

SheHacksPurple Newsletter: newsletter.shehackspurple.ca

owasp.org: owasp.org

owasp.org/Top10/2025: owasp.org

from here: github.com

Kinto: github.com

A01:2025 - Broken Access Control: owasp.org

A02:2025 - Security Misconfiguration: owasp.org

ASP.NET: ASP.NET

A03:2025 - Software Supply Chain Failures: owasp.org

A04:2025 - Cryptographic Failures: owasp.org

A05:2025 - Injection: owasp.org

A06:2025 - Insecure Design: owasp.org

A07:2025 - Authentication Failures: owasp.org

A08:2025 - Software or Data Integrity Failures: owasp.org

A09:2025 - Security Logging and Alerting Failures: owasp.org

A10:2025 - Mishandling of Exceptional Conditions: owasp.org

https://github.com/KeygraphHQ/shannon: github.com

anthropic.com/news/mozilla-firefox-security: www.anthropic.com

generalpurpose.com/the-distillation/claude-mythos-what-it-means-for-your-business: www.generalpurpose.com

Python Example Concepts: blobs.talkpython.fm

Watch this episode on YouTube: youtube.com

Episode #545 deep-dive: talkpython.fm/545

Episode transcripts: talkpython.fm

Theme Song: Developer Rap

🥁 Served in a Flask 🎸: talkpython.fm/flasksong

---== Don't be a stranger ==---

YouTube: youtube.com/@talkpython

Bluesky: @talkpython.fm

Mastodon: @talkpython@fosstodon.org

X.com: @talkpython

Michael on Bluesky: @mkennedy.codes

Michael on Mastodon: @mkennedy@fosstodon.org

Michael on X.com: @mkennedy

Episode Transcript

00:00 The OWASP Top 10 just got a fresh update, and there are some big changes.

00:03 Supply chain attacks, exceptional condition handling, and more.

00:07 Tanya Janca is back on Talk Python to walk us through every single one of them.

00:12 And we're not just talking theory here.

00:14 We're going to turn Claude Code loose on a particularly crappy web project and see what it finds.

00:20 Let's do this.

00:21 It's Talk Python To Me, episode 545, recorded April 8th, 2026.

00:28 Talk Python To Me.

00:30 Yeah, we ready to roll.

00:31 Upgrading the code.

00:32 No fear of getting old.

00:34 Async in the air.

00:35 New frameworks in sight.

00:36 Geeky rap on deck.

00:38 Quarth Crew, it's time to unite.

00:39 We started in Pyramid.

00:41 Cruising old school lanes.

00:43 Had that stable base, yeah, sir.

00:44 Welcome to Talk Python To Me, the number one Python podcast for developers and data scientists.

00:49 This is your host, Michael Kennedy.

00:51 I'm a PSF fellow who's been coding for over 25 years.

00:55 Let's connect on social media.

00:56 You'll find me and Talk Python on Mastodon, Bluesky, and X.

01:00 The social links are all in your show notes.

01:02 You can find over 10 years of past episodes at talkpython.fm.

01:06 And if you want to be part of the show, you can join our recording live streams.

01:10 That's right.

01:10 We live stream the raw uncut version of each episode on YouTube.

01:14 Just visit talkpython.fm/youtube to see the schedule of upcoming events.

01:19 Be sure to subscribe there and press the bell so you'll get notified anytime we're recording.

01:23 This episode is brought to you by Temporal, durable workflows for Python.

01:27 Write your workflows as normal Python code and Temporal ensures they run reliably, even across crashes and restarts.

01:34 Get started at talkpython.fm/Temporal.

01:38 Hello, Tanya Janca.

01:39 Welcome back to Talk Python To Me.

01:40 Awesome to have you here.

01:41 Oh my gosh, Michael.

01:42 It's so nice to see you.

01:43 Yeah, it's really great to see you as well.

01:45 I remember last time I was nervous you were on the show because you're going to make me feel concerned about all my software running on the internet that now all has all these issues I just realized.

01:55 We're back for the 2025 edition.

01:57 And I know the year is 2026.

01:59 Please don't email me.

02:00 I mean, email me, but not for that reason.

02:02 But this is the 2025 OWASP top 10, which is pretty new, right?

02:07 Yeah, so we released it December 31st, 2025 so that it could stay 2025 on it.

02:13 Do you know how much branding has to change if we don't get it out this year?

02:17 Let's just go.

02:18 That's incredible.

02:19 I didn't realize it was that close to the wire.

02:20 We had released the release candidate.

02:23 So every time it's released, we release a release candidate to say, this is what we're thinking.

02:29 And then we ask the community for feedback.

02:32 And I don't know if you remember in previous versions before I joined, there was some drama where sometimes the community is like, absolutely not.

02:39 You are incorrect.

02:40 Or there's vendor influence or whatever.

02:42 And then they've had to rework it.

02:43 But this time it was the smoothest it's literally ever been since the first time where all the links were or all the GitHub issues were great.

02:53 Like, hey, you know, here's a great example of that attack.

02:57 Do you want to use it?

02:59 And we're like, yes.

02:59 Or, you know, the grammar is wrong here or the, you know, the links are wrong.

03:03 We had a couple of, well, this one should be number one because that's what my product solves.

03:08 We're like, well, we'll hear that feedback.

03:11 Exactly.

03:11 You failed to mention our product.

03:13 I'm like, oh, I do see how that happened.

03:15 Yeah.

03:16 But other than like the feedback was overall just overwhelmingly, yes, we agree, which was very validating.

03:22 Yeah.

03:23 I'm sure there was a lot of, boy, if I could get the OWASP top 10 to reference my solution.

03:28 That's some good marketing right there.

03:30 Incredible.

03:30 So before we dive into that with a bit of a Python focus, let's just hear a little bit about you, who you are.

03:39 You've started a podcast since you've been on the show.

03:42 Tell us about you.

03:43 So I'm Tanya and I was a software developer that switched into application security.

03:48 I went to the dark side, Michael.

03:50 I started speaking at conferences so I could get in free and writing and then ended up writing two books.

03:55 And now I teach secure coding and like how to use AI securely and all of those things to large companies.

04:02 And then speak at conferences.

04:04 Serve as like a habit.

04:05 I can't stop.

04:06 You don't get paid to do that usually.

04:09 And so recently I started a podcast called DevSec Station.

04:12 And it's five to 10 minute lessons on secure coding and it's free.

04:19 I used to have a podcast called We Hack Purple Podcast and I, like my company got bought and absorbed, et cetera.

04:26 And eventually the podcast was retired.

04:28 I've missed having a podcast, Michael.

04:30 I'm sure as a podcast host, you can relate.

04:31 It's really nice to be able to create a piece of art and release it.

04:35 It's a very interesting medium and you get to just reach out to people or explore ideas that are just interesting to you.

04:43 And long as there's a through thread, you can kind of do whatever you want to.

04:47 It's great.

04:48 Yeah, I love it.

04:48 I wanted to teach some lessons and I wanted them to be just really short.

04:53 And the first season I'm exploring the idea that the supply chain is changing.

04:58 The supply chain security used to be just dependencies that people worried about.

05:02 But now I'm like, what if that attack surface is actually very different than we realize?

05:08 And so I'm talking about how developers can protect themselves, protect the organizations, protect their build pipelines, et cetera.

05:14 And so, yeah, I'm excited to see what people think of it.

05:17 Yeah, I encourage people to subscribe.

05:19 That's really cool.

05:19 Five to 10 minutes.

05:21 Is it daily or weekly?

05:22 So I released one two weeks ago and I was kind of thinking of releasing one tomorrow, but I need to just get the edits.

05:27 It's, I hired some students and they're learning video editing and it's been very exciting.

05:33 Yeah, that's the back end of all this type of work that people don't realize is it's an hour or 10 minutes or whatever, but then there's the whole production distribution, et cetera.

05:43 I'm just a control freak.

05:44 That's the problem, Michael.

05:46 I just want to do it myself.

05:48 Yes, exactly.

05:49 There's a bit of a wind noise thing.

05:51 Can we do that again?

05:52 No, it is tough.

05:53 It is really, really tough to kind of find that balance.

05:56 But yeah, people check that out.

05:57 That's awesome.

05:58 Yeah.

05:59 And is SheHacksPurple still your domain?

06:02 Yeah.

06:02 So if people go to SheHacksPurple.ca, you will find my website and my services and my blog.

06:08 So lately I'm blogging a lot about how we can combine behavioral economic interventions, which is like the science of why people make decisions to the software development ecosystem

06:19 so that we basically set up secure defaults and other things that just nudge developers to do the secure thing and make the secure thing always the easiest path.

06:30 And so not how do we manipulate them and pressure them and make them feel bad, but more how can we remove cognitive load that's not necessary?

06:39 How can we make it more obvious what we hope that they'll do?

06:42 How can we make it so like it requires effort to do the bad thing?

06:46 There's a phrase that I got, I think from Scott Guthrie was the one who spoke about it at Microsoft, but it doesn't really matter.

06:53 To help people, help developers and security folks fall into the pit of success.

06:58 Like you've got to climb out of the thing you're supposed to do and actively do it wrong.

07:02 You know what I mean?

07:03 Exactly.

07:04 Exactly.

07:04 So I'm writing a series on that based on a talk I did.

07:08 So sometimes I'll do a talk and then I'm really excited about it.

07:10 And I'm like, well, now I can nerd out as much as I want on my blog.

07:13 I want to talk about what is the OWASP top 10?

07:17 What is OWASP?

07:17 What is the OWASP top 10 and all of that?

07:20 But before we kind of get into that, I do want to set the stage just a little bit, because when people think about Python security or you pick your language, there's like every language and framework has a little security gotchas.

07:34 Like in Python, there's a, I think it's a YAML parser, but it's too, it can run arbitrary code.

07:41 So you got to do like the safe YAML parsing.

07:43 And then there's, there's pickles, which is a serialization format that can run arbitrary code.

07:47 So people say, these are the things to look out for.

07:50 I actually think those are fairly useless.

07:52 I think the real problem is things that developers do to write code and they either omit or add actions or steps that they should or shouldn't have done depending on the situation.

08:04 And really that's what OWASP focuses on, right?

08:07 Technically it is a nonprofit organization, but what I feel it is, is an international community of thousands and thousands and thousands of people who want there to be more secure software.

08:20 And so we have chapters where people meet each month.

08:22 There's over 300 worldwide, Michael.

08:25 It's amazing.

08:27 Wow.

08:27 Yeah.

08:27 Almost every big city in all of Canada has one.

08:30 And like, we can't agree on a lot of things in Canada, but apparently we love OWASP.

08:34 And like in India, oh my gosh, they have so many.

08:37 And in the United States, like people love, oh, I love OWASP.

08:41 And then we have over 100 active open source projects.

08:45 So we have free books, free documents, free tools, free software, like everything you can think of.

08:51 We're like, oh, I'll build one for you.

08:53 And then, you know, there's a Slack channel with thousands of us on there being nerds together.

08:56 And then twice a year, we have one in Europe and one in North America where we have a conference and we gather and there's talks and teachings and all the things, right?

09:08 And I've been a part of OWASP since 2015 was the first thing I attended.

09:16 And then 2016, I was a chapter leader because I don't know how to do small things.

09:19 I only know how to do really big things because I was like, oh, I'm in.

09:23 And I've just been, so I'm now a lifetime distinguished member because I've volunteered for over 10 years and I'm their biggest fan.

09:32 I'm totally president of the non-existent fan club.

09:35 And the thing, so the weird thing, Michael, so OWASP does so many amazing things.

09:40 There's so many amazing people.

09:41 But the thing that we're absolutely most famous for is called the OWASP top 10.

09:46 And I volunteered in Norway at the OWASP booth at a conference because I gave a talk and then I had nothing else to do.

09:55 And I don't mean to sound rude, but unless it's a security talk, I'm not going.

09:59 And it was a developer talk.

10:00 And so I went to the other two security talks and then I was like, what am I going to do?

10:03 So I volunteered at the OWASP booth.

10:05 And every person that walked by went top 10.

10:08 That's awesome.

10:09 And any of them that knew us, they only knew the top 10.

10:13 They didn't know we had chapters.

10:14 They didn't know we had any other things to help them.

10:16 But literally person after person, top 10.

10:20 That's pretty funny.

10:21 Like a few weeks later, the top 10 team invited me to join.

10:24 And I was like, me?

10:26 And I was like, okay.

10:28 I knew about the whole project because of the top 10 as well, honestly.

10:32 Well, I mean, then we're doing a great job.

10:34 And so we finally wrote a new one.

10:36 And the team's like, okay, Tanya, you like talking the most.

10:39 So you go tell them.

10:41 You go tell them it's ready.

10:42 Actually, if you go to the OWASP top 10, there's a GitHub link at the top.

10:47 And you can see that there's, if you go there, you can actually see historically the 2003, 4, 7, 10, 2017, 2021, and the 2025.

10:55 And you go and there's all the markdown files and presentations and et cetera.

10:59 So you can kind of get the historical evolution as well.

11:02 People file issues.

11:04 And then I have to, me or, you know, we're twisting or that's what we do.

11:09 We respond.

11:10 Sometimes we respond and we're like, no.

11:13 This is the third time you've asked.

11:14 No.

11:15 We talked about it twice.

11:16 Yeah.

11:17 We try really hard to always be open because the community, sometimes we don't have the data that supports the thing.

11:23 But let me tell everyone what it is.

11:24 So if you've been hiding under a rock, the OWASP top 10 is an awareness document of the top 10 things, according to the data we gathered and multiple community surveys of risks to web applications.

11:37 Most of them relate to all software, but technically this one is about web applications.

11:43 And you might not realize, but underneath the next steps, there's three more secret items because we couldn't decide, Michael.

11:52 And vibe coding needed to be on there.

11:54 And then there was sort of a tie for the number 10.

11:58 So we want to include the one that was the tie.

12:00 And then we felt memory safety is still so unbelievably critical.

12:05 We had to comment on that as well.

12:07 So we had to talk about those as well.

12:10 So that was good.

12:12 So it's the top 10, but these are three other things for your consideration that are very important.

12:16 I did not realize those.

12:17 How interesting.

12:18 I do think in whenever you release your next version in two, three, four years, whatever, there's going to be a strong AI bent and we're going to get into the AI angle of security in this conversation, which is going to be really fun.

12:32 But yeah, I think we're just beginning this.

12:35 Like you called it out with vibe coding, but there's more to that even.

12:38 Making the top 10 is complicated because we have to do it based on data.

12:43 And the data I would like would be the postmortem from security incidents and would be, you know, the AppSec team telling us the things that are happening, not what we actually

12:54 get, which is a bunch of SAST vendors and DAST vendors telling us what their automated tools are capable of finding.

13:00 And then a bunch of boutique pen tester companies who are so generous to give us their reports and to try to normalize that for us.

13:09 And like we end up with millions and millions and millions of records and that's great.

13:13 But if a SAST tool is really good at finding X, that doesn't necessarily mean that X is the biggest problem in our industry.

13:22 And so when we put supply chain security on there and expanded it from just being libraries, like, oh, you're using outdated or vulnerable components.

13:31 Like, yeah, that's bad.

13:33 But there's also malicious components.

13:35 There's also you didn't lock down your CI and then the co-op term, like the co-op or you call them interns, put too many zeros on the Kubernetes deployment.

13:45 And then you've got a $30,000 bill you weren't expecting. Like, I've seen it, right?

13:50 I feel like it's hard to get data that tells the full picture.

13:54 And we're not allowed to just say, well, this is what the team thinks, right?

13:58 So that's where the surveys come in.

13:59 And we're like, this is what we see.

14:02 This is what we think.

14:02 Do you agree?

14:03 And like across the board, everyone was like, supply chain must be on that list.

14:07 You must expand it.

14:08 We agree.

14:09 I think it's the biggest issue of the year, at least of the last six months.

14:12 If we look at reports like the Verizon breach report and the CrowdStrike and the like the big reports of many, many breaches, if we look at the big ones, the nation state ones,

14:24 they're supply chain, or in my opinion, if we really look at it, we think about it, they exploited the developer.

14:31 They compromised a developer within an organization.

14:33 It gave them access to multiple parts of the supply chain.

14:37 And then they owned the entire organization.

14:40 So if you get SQL injection in one app, you got into one database and maybe you could read sensitive data.

14:46 Maybe you could delete sensitive data.

14:49 If that database was completely unpatched in a total like terrible mess, then maybe you could take over that server.

14:56 Then if your network's totally not secure and crappy, which is not exactly that common, like I'll look at, you know, network diagrams with clients and I'm like, oh, that line, is that a firewall?

15:06 They're like, it's more aspirational.

15:07 I've heard that many times, right?

15:09 Then maybe they could pivot and get to a couple of places, but that's like, maybe, maybe, maybe, maybe, maybe you get a little bit, but you compromise a senior developer.

15:18 Right.

15:18 And so, yeah, I was really glad when the team agreed that we would do this and then the community supported it.

15:25 So I was like, yes, win.

15:26 Yeah.

15:27 You compromise a developer, then you get.

15:29 Especially a senior.

15:30 Yeah, exactly.

15:31 You get arbitrary code execution on potentially all the stuff that they send out to the world.

15:36 It's, it's really bad.

15:37 You know, an example of that would be the LastPass breach.

15:40 Yeah.

15:40 And the way that that all started from what I understand is one of the developers had an outdated version of Plex, the like streaming ripped video player on his home network that was open on the internet.

15:53 That got taken over.

15:54 Then they got into the dev machine and then they got everybody's password vaults.

15:57 It's like, excuse me, because you had a movie player on your home automation network.

16:03 That's crazy.

16:04 There's been some recent hacks where the developers download like a plugin or something and it's malicious.

16:10 And then not only is it trying to steal like secrets off their computer, but then it robs their crypto wallets.

16:17 Because why don't you just kick someone when they're down like jerks?

16:21 It's terrible.

16:22 Well, we'll circle back to that.

16:23 But I do think the vibe coding side is going to be a big deal in the future and you'll have a little bit of a challenge there.

16:29 I think obviously professional developers love to bag on vibe coding.

16:34 And I think that that's totally fair.

16:35 But the problem is, I think a lot of that is kind of dark matter.

16:39 Like you'll never see the people because the people do vibe coding.

16:43 They don't know to go and fill out a survey for OWASP.

16:45 They don't even know what a line of code looks like.

16:47 They're just make me happy.

16:49 You know, it's crazy.

16:50 And now some companies are building dark factories, which is a term that was new for me, which is where they replace their entire software development team only with complete AI end to end solutions where there's no human in the loop whatsoever.

17:05 And oh my gosh, Michael, imagine the security posture of what's being released there and the fact that they don't know what the posture is, right?

17:13 But they're getting to market faster than someone else.

17:16 Yes.

17:17 And so consumers don't know that what they're buying has been put together with duct tape and glue.

17:23 So I actually, we didn't talk about this beforehand, but I hope it's okay to mention.

17:27 So I'm trying to push a secure coding law in Canada.

17:30 So Canada is cute and quaint, just like all the stereotypes.

17:34 And a citizen is allowed to create a petition if she could get a member of parliament to support her.

17:39 And after three years of letter writing, one of them did.

17:42 Wow.

17:42 Congratulations.

17:43 Thank you.

17:44 In the house of commons and I have enough signatures, so it's going to go to vote.

17:47 And so right now I'm lobbying all the public to try to get them to call their member of parliament and ask them to vote.

17:54 Yes.

17:54 Because what happens is my member of parliament will be like, Hey, E-7115 says this.

18:01 We should create a secure coding law for all governmental organizations, have a standard and then, you know, assure the standard, make, make sure there's compliance.

18:09 And I also like wrote the standard for them and sent it to them.

18:12 Cause that's what I'm like, I'm like, you don't have to use it, but like, here it is in case you want one.

18:16 I've been writing letters for years.

18:17 I'm very annoying.

18:18 Guess what?

18:19 Members of parliament don't know what the word cyber means.

18:21 Like they're very smart.

18:22 I'm not trying to mock them.

18:23 I'm not an expert in what they do either.

18:25 Right.

18:26 And so I need members.

18:28 So Canadians, if you're listening, go look up petition E-7115.

18:33 You'll find me sign it and then call or write your member of parliament.

18:36 So if enough of us call, if like 10 or 20, 30 people call the member of parliament or they receive emails, Michael, when the petition comes up, they're like, Oh yeah, my constituents care.

18:45 Therefore I do.

18:46 And they'll raise their hand and I get one chance.

18:49 And I really want a lot of hands going up specifically at least half of 334 people.

18:54 Good luck with that.

18:55 I hope that that goes through.

18:56 That's cool.

18:56 We'll know by June.

18:58 Yeah.

18:58 That's the challenge with legislatures and government in general.

19:01 So many of the people, especially elected officials, they're not elected because they're developers or security specialists or whatever.

19:08 Right.

19:08 But the difference is that you said it's fine because you don't know what they do.

19:13 You're not an expert in law or whatever.

19:14 That's that is true.

19:15 But they have to choose how it's going to work for us through technology, whereas you don't have to choose how law works for them as a tech.

19:23 You know what I mean?

19:23 Like it's they decide.

19:25 So it's I'm not saying that it's their fault or anything, but it is a very tricky thing to balance.

19:30 It is.

19:30 And this is why I, as like an influential person or whatever, am trying to use my influence for good.

19:37 And I'm trying to protect Canada.

19:39 And here's the thing, Michael, is that if Canada creates a law that does this, that is huge momentum for every other country.

19:46 And Canada was one of the first countries to have privacy laws.

19:50 Like we really led the way in that.

19:51 We really have led the way in like laws for quantum as well.

19:56 And like, we're not really used to being that we're just, you know, struggling along and we could lead the way in this.

20:04 Right.

20:04 And then that means other countries can say, well, they have one.

20:07 Like, do we really want to be behind Canada?

20:09 I mean, come on.

20:10 We love Canada.

20:11 Canada is awesome.

20:11 Yeah, we're sweet and we're wonderful.

20:13 And we mean well all the time.

20:16 This portion of Talk Python To Me is sponsored by Temporal.

20:18 Ever since I had Mason Egger on the podcast for episode 515, I've been fascinated with durable workflows in Python.

20:25 That's why I'm thrilled that Temporal has decided to become a podcast sponsor since that episode.

20:30 If you've built background jobs or multi-step workflows, you know how messy things get with retries, timeouts, partial failures, and keeping state consistent.

20:39 I'm sure many of you have written brutal code to keep the workflow moving and to track when you run into problems.

20:45 But it's trickier than that.

20:46 What if you have a long-running workflow and you need to redeploy the app or restart the server while it's running?

20:52 This is where Temporal's open source framework is a game changer.

20:56 You write workflows as normal Python code and Temporal ensures that they execute reliably, even across crashes, restarts, or long-running processes while handling retries, states, and orchestrations for you so you don't have to build and maintain that logic yourself.

21:10 You may be familiar with writing asynchronous code using the async and await keywords in Python.

21:16 Temporal's brilliant programming model leverages the exact same programming model that you are familiar with but uses it for durability, not just concurrency.

21:25 Imagine writing await workflow.sleep.

21:28 timedelta, 30 days.

21:30 Yes, seriously.

21:31 Sleep for 30 days.

21:32 Restart the server.

21:33 Deploy new versions of the app.

21:34 That's it.

21:35 Temporal takes care of the rest.

21:36 Temporal is used by teams at Netflix, Snap, and NVIDIA for critical production systems.

21:41 Get started with the open source Python SDK today.

21:44 Learn more at talkpython.fm/Temporal.

21:47 The link is in your podcast player's show notes.

21:50 Thank you to Temporal for supporting the show.

21:52 Let's shift just a little bit to maybe people who don't necessarily mean well, the people who might exploit, you know, broken access control or other types of things.

22:03 And to aid us here, going through the OWASP top 10, I've come up with a little example of, well, this is what some of the concrete examples might look like.

22:13 Maybe I'll put a link to this and I'll reference them.

22:16 And I don't know how often I'll totally use this.

22:18 But let's start with this one.

22:20 And if I understand it correctly, you all don't like suspense.

22:24 It's the worst comes first, then the second worst, and then the third worst.

22:27 Yeah, we go in order of this.

22:29 So this is, it causes lots and lots of damage.

22:33 It's not that hard to find or exploit.

22:35 And it's everywhere.

22:37 It's everywhere.

22:38 When I talk to people that do pen testing, they're like, yeah, I find this basically every time.

22:43 Like one of my friends, Katie Paxton-Fear, she does API content and pen testing and bug bounty and stuff.

22:51 And she said, I have never once not found broken access control in an API, like never once.

22:59 And it's really hard to get right, Michael, because every single page, every single record, every single access, we should check that the role is allowed.

23:08 And that they still are that role, right?

23:11 So we continue to make sure the session's accurate and then grant access.

23:15 And unfortunately, we just forget to ask a lot of the time.

23:18 Or we return the entire record set, and we'll just sort it out on the front end.

23:23 And the malicious actor is like, thanks for the data set.

23:26 That's so sweet of you to give that to me.
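
A small sketch of the alternative to "return the entire record set and sort it out on the front end": filter on the server and check ownership on every record access. The `Invoice` type, the in-memory `INVOICES` list, and both function names are hypothetical stand-ins for a real database and API layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    id: int
    owner_id: int
    total: float


# Hypothetical in-memory "table"; a real app would query a database.
INVOICES = [
    Invoice(id=1, owner_id=10, total=25.0),
    Invoice(id=2, owner_id=10, total=40.0),
    Invoice(id=3, owner_id=20, total=99.0),
]


def list_invoices(current_user_id: int) -> list[Invoice]:
    """Filter on the server so other users' rows never leave it."""
    return [inv for inv in INVOICES if inv.owner_id == current_user_id]


def get_invoice(current_user_id: int, invoice_id: int) -> Invoice:
    """Check ownership on every single record access, not just on list pages."""
    for inv in INVOICES:
        if inv.id == invoice_id:
            if inv.owner_id != current_user_id:
                raise PermissionError("not your invoice")
            return inv
    raise LookupError("no such invoice")
```

With this shape, guessing another record's id gets an error back instead of the record.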

23:29 And we just screwed up so much, so much.

23:32 I would like to point out that these are not a single vulnerability.

23:35 It's not like this is the number one issue.

23:38 They're like categories, right?

23:39 It's like violations of these could be you didn't use least privilege, or you bypass a control check, or you didn't put access control on the delete part of the API.

23:49 You know, like there's a bunch of things that fall into each one of these, right?

23:52 It's a category.

23:52 They're all a bucket.

23:55 And when we looked at the data, poor code quality was a bucket.

24:00 I'm like, no, because all of the things go into that bucket, right?

24:03 The OWASP top one.

24:04 OWASP top one.

24:05 And then also the mitigation advice is, what if you sucked less, right?

24:10 Like there's no constructive feedback to poor code quality.

24:12 It's not specific enough.

24:14 So we didn't want buckets like that.

24:16 Keep it actionable, right?

24:17 Yeah, exactly.

24:18 If it's not actionable, it's not worth raising awareness about, we felt.

24:21 And broken access control, Michael, it's everywhere.

24:24 And I wish there was like a product that you could buy that could just do this for you.

24:28 So you can buy authentication products that manage session and identity like really, really, really well, right?

24:35 And people buy the crap out of them because they work really well.

24:38 I'd like to be able to buy an access control tool that was as easy to implement as, I'm going to try not to name brands, but you know the products, right?

24:48 People pay a lot of money for Okta, you know, like Active Directory, you know, Cognito, because they work, right?

24:57 And they work well.

24:58 And the less painful they are to implement, the more likely, like they're willing to pay more for that, right?

25:04 And so if we could solve this issue, like I think that could be pretty huge.

25:08 Yeah.

25:09 I got a couple of examples here that were, they're not obvious.

25:12 Some are obvious, kind of like, here's a Django example.

25:15 We might see this later.

25:16 So you might have an admin endpoint and it has @login_required, which is a decorator in Django that will do the validation before the function even runs.

25:25 And so you look at it like, oh yeah, this is fine.

25:27 It's using authentication here, but it's not using authorization.

25:30 It's not checking that the person necessarily is an admin.

25:33 It's just that they're logged in, right?

25:35 Like that's a real simple example.
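
The difference between authentication and authorization described above can be sketched without any framework. Everything here (`User`, `Forbidden`, `staff_required`, and the two view functions) is a hypothetical stand-in written for illustration; in a real Django project you would likely reach for built-in decorators such as `user_passes_test` or `permission_required` rather than rolling your own.

```python
from dataclasses import dataclass
from functools import wraps


class Forbidden(Exception):
    """Raised when a request is not allowed to proceed."""


@dataclass
class User:
    name: str
    is_authenticated: bool = False
    is_staff: bool = False


def login_required(view):
    """Authentication only: is anyone logged in at all?"""
    @wraps(view)
    def wrapper(user, *args, **kwargs):
        if not user.is_authenticated:
            raise Forbidden("login required")
        return view(user, *args, **kwargs)
    return wrapper


def staff_required(view):
    """Authorization: is this logged-in user allowed to do this?"""
    @wraps(view)
    def wrapper(user, *args, **kwargs):
        if not (user.is_authenticated and user.is_staff):
            raise Forbidden("staff only")
        return view(user, *args, **kwargs)
    return wrapper


@login_required
def admin_panel_broken(user):
    # Looks protected, but any logged-in user gets through.
    return f"admin panel for {user.name}"


@staff_required
def admin_panel_fixed(user):
    return f"admin panel for {user.name}"
```

A regular logged-in user passes the first check and is stopped by the second, which is the gap the example in the conversation is pointing at.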

25:37 Yep.

25:38 And I see the problem.

25:39 Another one would be if you say we're going to let people read and write files.

25:43 Maybe it's like a WordPress type thing or something.

25:46 But then if they can put dot-dot in their path and break out, like, let me read the file ../../etc/passwd, and read the passwords or whatever, or usernames.

25:57 This is the type of stuff that falls into broken access control, or just no login checks at all.
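
One common way to block the dot-dot path trick described above is to resolve the requested path and confirm it still sits under the directory you meant to expose. This is a sketch under assumptions: `BASE_DIR` and `resolve_user_path` are hypothetical names, and `Path.is_relative_to` needs Python 3.9 or newer.

```python
from pathlib import Path

# Hypothetical directory the app is allowed to serve files from.
BASE_DIR = Path("/srv/app/uploads")


def resolve_user_path(user_supplied: str) -> Path:
    """Return a safe absolute path, or raise if it escapes BASE_DIR."""
    base = BASE_DIR.resolve()
    # resolve() collapses ".." segments and follows symlinks, so the check
    # below sees where the path really points.
    candidate = (base / user_supplied).resolve()
    if not candidate.is_relative_to(base):
        raise PermissionError(f"path escapes upload directory: {user_supplied!r}")
    return candidate
```

The same check also rejects absolute paths, because joining an absolute path onto `base` discards `base` entirely.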

26:01 I literally did this yesterday, Michael, because someone was like, hey, go get this file from there.

26:05 And then I go in the folder and it's not there.

26:07 Like the link wasn't correct.

26:08 So I just went through the web directory with that.

26:11 Like, but they wanted to send me the file.

26:14 So just to be clear, like, like they sent me and told me to go get it.

26:18 I wasn't stealing anything.

26:19 And I didn't end up eventually finding it either.

26:22 So then they had to send me another link that was correct.

26:25 But I was like, oh, I'll just like not waste their time.

26:27 I'll just go look for myself because it's that easy.

26:30 Incredible.

26:30 It's like, I'm going to use their tools, but not in a way that they necessarily expected.

26:33 Well, I mean, they could have just sent me the right link.

26:35 Exactly.

26:36 They should have just sent you the right link.

26:37 Yeah, I have some, I cannot recount them here, but my dad, I've got to help take care of him and stuff these days.

26:45 And he can't do a lot of his own paperwork and things.

26:48 And so I've had to do some crazy stuff to get access to, or to help him fill out, something that, yeah, it's insane.

26:55 OK, let's go on to number two.

26:57 This is just setting the wrong configurations, not following the hardening guide, not doing patching.

27:02 And this is so easy for a malicious actor to find because there's scanners.

27:06 I joke whenever anyone's like, oh, like, you know, we don't want to do a pen test because it might break our thing.

27:12 And I'm like, well, you're actually having a penetration test done all the time.

27:15 If you're on the Internet, you just aren't receiving the report.

27:18 That is absolutely so true and so disturbing.

27:21 If you're out there listening and you have something on the Internet, API, website, whatever, and you have not just tailed the log of it to see /wp/admin/this/that just coming at it left and right.

27:34 You're like, what is going on?

27:36 Because it doesn't show up in your analytics.

27:37 I actually had someone find something on my website that I was surprised about because I had a user from my blog from long ago and they could see my user and then it gave my

27:49 email address and it was actually a personal email address that I because I had a backup admin account and I used my personal email and he is like, did you want that on there?

27:57 I'm like, no, only my mom and my dad email me there because I'm bad.

28:01 And if my parents write me, I should write back.

28:06 Right.

28:07 And so I'm like, oh, that's the personal email that I'm supposed to answer on time.

28:12 He wrote me and helped me like turn off that setting that I had no idea about despite having multiple security plugins and having run an audit.

28:20 I'd miss that.

28:20 For Python people, this one could involve Django with DEBUG = True.

28:24 Now this is the most, probably most used misconfiguration example for Django apps out there.

28:30 You're like, well, of course, Michael, I know you don't set debug true in production.

28:34 You do it all the time.

28:35 People do it.

28:35 You're right.

28:36 And then two, there's like 10 other settings that should be on in production that are not on in production in Django.

28:42 Like HSTS.

28:44 Yes, please.

28:45 And a bunch of other, you know, do not allow me to put it, be put into an iframe and all sorts of other things, content security policies and security headers.

28:53 Yes, exactly.
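
A hedged sketch of what a production Django settings fragment covering the items above might look like. The setting names are real Django settings; the values and the `DJANGO_DEBUG` environment variable are illustrative assumptions, and a content security policy is configured separately and not shown here.

```python
import os

# Off unless explicitly enabled; DJANGO_DEBUG is a hypothetical variable name.
DEBUG = os.environ.get("DJANGO_DEBUG", "").lower() == "true"

SECURE_SSL_REDIRECT = True             # send plain-HTTP requests to HTTPS
SECURE_HSTS_SECONDS = 31_536_000       # HSTS for one year
SECURE_HSTS_INCLUDE_SUBDOMAINS = True  # apply HSTS to subdomains as well
SECURE_CONTENT_TYPE_NOSNIFF = True     # stop browsers guessing content types
X_FRAME_OPTIONS = "DENY"               # refuse to be rendered inside an iframe
SESSION_COOKIE_SECURE = True           # session cookie only over HTTPS
CSRF_COOKIE_SECURE = True              # CSRF cookie only over HTTPS
```

Running `python manage.py check --deploy` against production settings reports several of these if they are missing, which fits the checklist-and-scanner approach discussed here.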

28:54 This is what happened with Claude Code.

28:56 And this is how they essentially allowed debug in production, like by not suppressing their map file and then also not having it as part of their git ignore, which is essentially having debug mode in prod.

29:09 And that's how they lost their source code.

29:11 Just to be clear, I'm not shaming the developer that did that.

29:14 They probably didn't have a checklist for that person.

29:17 They probably didn't have anything that scanned to tell them that those settings were incorrect.

29:21 Right.

29:22 They probably don't even have a policy that clarifies.

29:25 They're like, oh, they should just know not to do that.

29:27 Right.

29:27 And then they were rushed.

29:28 Probably they're in a hurry.

29:30 And then.

29:31 Yeah.

29:31 They're shipping three or four times a day, which I appreciate, but at the same time.

29:34 Yeah.

29:34 And then now very bad things are happening.

29:38 It is going to be the most audited code that has ever happened.

29:41 Michael.

29:41 I've seen so many videos parsing that stuff apart.

29:45 It's wild.

29:45 Yeah.

29:46 For people who don't know, I'm sure people heard Claude Code got leaked, but basically the map file in JavaScript says, here's the minified version.

29:54 But if you want to show the full source version for helpful debugging, here it is.

29:59 And there's how you get to it.

30:00 And that apparently got shipped.

30:01 And people were just like, you know what?

30:02 Why don't we find out what those files are actually?

30:04 And it was two security misconfigurations because one of them would have stopped it from going and the other one would have like not had it been in the package in the first place.

30:14 Right.

30:14 And it's number two.

30:16 And it happens even to the really, really, really, really, really high profile, you know, high security assurance requiring places.

30:23 I have a third one on this list that I think will really surprise people like for real.

30:29 So imagine this.

30:30 I've got a self-hosted app and I'm going to run it in Docker.

30:35 You know, it could be Kubernetes.

30:36 It could be whatever.

30:37 But I'm going to run it in Docker on my server.

30:40 And it has both a web interface and a database.

30:44 And the database is running on the default port and so on.

30:47 So that's all fine.

30:48 But it's in Docker and everything's locked down.

30:51 And so what you could do is you can use this thing called UFW, Uncomplicated Firewall, on Linux.

30:55 Turn that on and say block or don't block.

30:58 Only allow my web port.

31:00 And in your Docker compose file, you often or even just Docker statements, you see map, say Postgres, port 5432 to 5432.

31:09 Guess what?

31:09 That's actually open on the Internet, probably with the default password on that database.

31:13 Because if you look at the Docker docs and you go to the bottom, it says Uncomplicated Firewall is a frontend that ships with Debian and Ubuntu, and it lets you manage firewall rules.

31:21 Docker and UFW use firewall rules in ways that make them incompatible.

31:26 When you publish a container's ports using Docker, traffic gets diverted before it goes through the UFW settings.

31:30 So guess what?

31:30 That's open on the Internet.

31:32 Holy smokes.

31:33 It's so common to just see this port on the container mapped to this port on the server.

31:39 And if you're thinking that UFW on your firewall is going to save you, it's actually just open on the Internet.

31:45 Like I didn't realize that.

31:46 And so, for example, what do you do?

31:47 Well, you say localhost colon my port on the server.

31:52 So you're shipping, you're only listening locally.

31:54 You don't have, or you just don't do that.

31:55 But that's a really subtle and sneaky one that people should be aware of.
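
As a rough illustration of the port-mapping point above, here is a small checker for Compose-style short port mappings that flags anything not bound to a loopback address. `binds_beyond_loopback` is a hypothetical helper, it handles only the simple `[ip:]host_port:container_port[/protocol]` shapes, and it assumes the Docker default of publishing on all interfaces when no host IP is given.

```python
import ipaddress


def binds_beyond_loopback(mapping: str) -> bool:
    """Return True if a published port is reachable from outside the host."""
    spec = mapping.split("/", 1)[0]   # drop a trailing "/tcp" or "/udp"
    parts = spec.rsplit(":", 2)       # [host_ip, host_port, container_port]
    if len(parts) < 3:
        # No host IP given: assumed to be published on all interfaces.
        return True
    host_ip = parts[0].strip("[]")    # IPv6 addresses are written in brackets
    return not ipaddress.ip_address(host_ip).is_loopback


for mapping in ["5432:5432", "127.0.0.1:5432:5432", "0.0.0.0:8000:8000"]:
    status = "EXPOSED" if binds_beyond_loopback(mapping) else "local only"
    print(f"{mapping:<22} {status}")
```

A check like this could run in CI against a compose file's port list, so the database mapping gets caught before anyone relies on the host firewall to hide it.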

32:00 The thing is no one can memorize all of this.

32:02 And so what is the answer, Michael?

32:04 Checklists?

32:05 Checklists are good.

32:06 Scanners?

32:06 Scanners are good.

32:07 Honestly, I think the modern top tier AI agentic tools are really good.

32:13 They find a surprising amount of these things.

32:16 They find them if you ask them to find them, or they make it part of the code that they give you when you just ask for it.

32:23 Because people just say, I want the app.

32:24 They don't say, I want a secure app necessarily.

32:26 And well, it's more efficient to not worry about the security.

32:29 We'll save you some tokens.

32:30 Even if you just say, I want a secure app.

32:33 So I gave a conference talk two weeks ago at RSA called Insecure Vibes.

32:37 In the demo that I recorded in advance that was not part of the slides when I gave my live presentation, but it's on my YouTube.

32:44 I just asked Claude, I'm like, can you make a login function that's for an insulin pump?

32:49 So this is a medical device that needs to be really secure.

32:52 And it does it.

32:53 And then after I'm like, analyze it for vulnerabilities.

32:55 And multiple AIs found critical vulnerabilities in it.

32:59 So I asked for it to be secure.

33:00 So you can't just say, I want to be secure.

33:02 You have to say, and this is what secure means.

33:05 I do think you can find a lot if you use the tools in the right way.

33:08 But like you said, you've got to ask.

33:09 And it's a proper step.

33:11 Software supply chain failures.

33:13 Number three.

33:14 This is the expansion.

33:15 So this used to be vulnerable and outdated components, which is part of your software supply chain.

33:20 But Michael, I'm sure that I've told you this before, but for people that haven't heard me, blah, blah, blah, about it.

33:25 Every single thing that you use to create and maintain your software is part of your supply chain.

33:31 So that includes your browser, the plugins in the browser, the sandbox you created, your CI, all the settings in the CI, where you're getting your libraries and packages from, how you're getting them.

33:43 So do they maintain integrity across the wire when you get them?

33:47 Could it be that you got something else?

33:49 Like every single thing that you're using to maintain and create is part of the supply chain.

33:56 And so we need to protect the whole thing.

33:59 And like we were saying earlier, developers themselves are becoming targets of malicious actors.

34:04 We need to find ways to defend the developer themselves, protect them, make them safer doing their jobs, right?

34:12 And help them find ways to secure the whole supply chain that's not too painful because they still need flexibility in order to be creative.

34:20 So some Python things that you can do concretely here is pin your dependencies.

34:24 You can use pip-compile or you can use uv lock files.

34:28 There's all sorts of things that are possible there.
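
Pinning goes further when the lock file also records hashes, which is what pip's hash-checking mode (`--require-hashes`) enforces at install time. The sketch below shows only the underlying idea, comparing a downloaded artifact against a pinned SHA-256 digest; `matches_pinned_hash` is a hypothetical helper, not part of pip or uv.

```python
import hashlib
import hmac


def matches_pinned_hash(data: bytes, pinned_sha256: str) -> bool:
    """Check that downloaded bytes match the digest recorded at pin time."""
    actual = hashlib.sha256(data).hexdigest()
    # compare_digest does a constant-time comparison of the two hex strings.
    return hmac.compare_digest(actual, pinned_sha256.lower())


# Pretend this is the wheel we reviewed and pinned.
original = b"contents of some-package-1.2.3.whl"
pinned = hashlib.sha256(original).hexdigest()

# Later, the bytes we download are either identical or they are not.
tampered = original + b" plus something extra"
```

If the bytes changed anywhere between the index and your machine, the digest no longer matches and the install should stop.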

34:31 And then you can also, I think the other side that we haven't mentioned, Tanya, is like known vulnerabilities in packages.

34:38 I think a lot of people, I would say over 95% of the people that install libraries from PyPI, they don't even check whether or not there's a vulnerability in that package before they install it.

34:50 I would like to see.

34:51 So in 2022, the first company announced this idea of reachability.

34:56 So let's say you want to do math.

34:58 So you install a math library.

34:59 We don't actually want to do all of math, right?

35:02 We probably just want to do calculus, but maybe the vulnerability's in the statistics function, right?

35:07 And so when your code calls all the calculus functions, you're like, woo, derivatives.

35:14 You're not actually, there's no reachable path from your code to the vulnerability.

35:19 Most of the time, that means it's not exploitable, except for if it's Log4j, then you're just screwed.

35:24 Just to be clear, you're just in trouble, right?

35:26 But for most things, like 99.9% of the time, then you're fine if there's no reachability.
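
A deliberately naive sketch of the reachability idea, using the standard `ast` module to list what a piece of code calls directly. The `mathlib` package and its function names are made up for illustration, and real reachability analysis follows transitive call graphs through dependencies, which this does not attempt. `ast.unparse` needs Python 3.9 or newer.

```python
import ast


def called_names(source: str) -> set[str]:
    """Collect the dotted names of everything the source calls directly."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            # Turn the callee expression back into text, e.g. "mathlib.derivative".
            names.add(ast.unparse(node.func))
    return names


# Hypothetical application code that only uses the calculus part of a library.
app_source = """
import mathlib

slope = mathlib.derivative(lambda x: x ** 2, 3)
print(slope)
"""

# Hypothetical advisory: the flaw lives in a function we never call.
vulnerable_function = "mathlib.stdev"
is_reachable = vulnerable_function in called_names(app_source)
```

Here the vulnerable function never appears among the calls, which is the situation described above where a finding is real but likely not exploitable from your code.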

35:31 And so software composition analysis tools, sometimes called supply chain security tools, when the marketing teams got a little out of hand.

35:39 I feel like if you do one of the 19 attack surfaces within the supply chain that you don't get to call yourself a supply chain tool, but I digress.

35:47 I have strong feels.

36:17 That's so overwhelming.

36:18 I'm just not even going to look at it.

36:20 I have a known vulnerability in one of the packages that I am shipping to production.

36:25 I think it's a PDF package or something like that.

36:28 I can't remember.

36:29 And I scan all my builds with pip-audit and it will fail the build.

36:33 So I have to ignore it because it is a vulnerability when you call a path that I don't call and you're running on Windows.

36:40 And I'm trying to deploy it to Docker.

36:42 And I'm not calling that path.

36:44 I'm like, I understand it's a problem that could be an issue under some circumstances, but it doesn't apply here.

36:50 And I just need to use it.

36:51 And it's a Windows problem on my Docker Linux.

36:54 I don't really care right now.

36:56 I mean, it's fine.

36:56 Until they fix it, I'll be okay.

36:58 Well, and especially if you know what the problem is and you're not going to suddenly switch to Windows, why would you do that?

37:05 So the tools are maturing, but they're not perfect.

37:09 And lots of them are going at different speeds.

37:13 We'll just say that.

37:13 So I look forward to the day where there's reachability done on all of those things.

37:20 This portion of Talk Python To Me is brought to you by us.

37:23 I want to tell you about a course I put together that I'm really proud of, Agentic AI Programming for Python Developers.

37:31 I know a lot of you have tried AI coding tools and come away thinking, well, this is more hassle than it's worth.

37:37 And honestly, all the vibe coding hype isn't helping.

37:40 It's a smokescreen that hides what these tools can actually do.

37:44 This course is about agentic engineering, applying real software engineering practices with AI that understands your entire code base, runs your tests, and builds complete features under your direction.

37:57 I've used these techniques to ship real production code across Talk Python, Python Bytes, and completely new projects.

38:04 I migrated an entire CSS framework on a production site with thousands of lines of HTML in a few hours.

38:10 Twice.

38:11 I shipped a new search feature with caching and async in under an hour.

38:15 I built a complete CLI tool for Talk Python from scratch, tested, documented, and published to PyPI in an afternoon.

38:24 Real projects, real production code, both greenfield and legacy.

38:29 No toy demos, no fluff.

38:31 I'll show you the guardrails, the planning techniques, and the workflows that turn AI into a genuine engineering partner.

38:37 Check it out at talkpython.fm/agentic-engineering.

38:41 That's talkpython.fm/agentic-engineering.

38:45 The link is in your podcast player's show notes.

38:47 One of the other things I wanted to mention is like, so you said pin dependencies.

38:52 And so I teach this and then inevitably every time someone's like, well, if I pin dependencies forever, then I just have all these really old dependencies.

38:59 That's not what Michael means.

39:01 He means you do development, you update your dependencies to a version.

39:05 Like ideally you're like LTS, you're like latest, you know, stable version of whatever the thing is.

39:10 Because you're trying to keep like definitely a supported version, recent, you're not picking terrible things where, you know, it hasn't been updated in two years, or there's one maintainer and they happen to live in Russia and work for the Russian government, right?

39:23 So you're picking like decent ones.

39:25 You're updating it in dev.

39:26 You're like, okay, this is the one.

39:27 Then you pin it.

39:28 So as it goes up to different environments, you don't get a surprise update and it changes.

39:33 And then there's something different in prod than what you tested in UAT and approved with the security tools.

39:39 That's what, that's what.

39:40 And a hundred percent.

39:41 Because it gets misinterpreted.

39:43 Yeah.

39:43 And another thing that you can do real simple is like with some of the tools, like with uv, you can say, I have pinned dependencies, update them to the current ones

39:52 with a very important caveat that you can say that are older than a week or older than a day or something.

39:58 But because, you know, the really big example here is LiteLLM. Was that just this week or was that last week?

40:05 I can't keep it.

40:06 It was very recent.

40:07 Yeah.

40:07 This thing has a dependency that itself became, like you talked about, the developer got taken over, I believe, and a virus got put in and it was only out for like half an hour or something, but it took over, it's so popular.

40:20 It took over like 50,000 machines because it gets downloaded millions of times a day.

40:26 Automatically.

40:26 If you say, give me the latest, he's like, obviously waiting a week is not hardcore security, but at the same time, so many of these popular issues that people take, they only last briefly, right?

40:36 For a few moments.

40:37 And then somebody's like, oh my gosh, why is this thing using 100% CPU?

40:41 You know what I mean?

40:42 And here's the thing, Michael, is that not all of those 50,000 got the memo that this happened and they're still vulnerable in prod and they could be from them.

40:49 Yeah.

40:49 It could be for a long time.

40:50 Yes, I agree.

40:51 Like update, but to, you know, one that's three days old or one week old, it's weird.
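The "update, but only to something that has been out for a few days" rule can be sketched as a small selection function. Everything here is an assumption for illustration: the `releases` mapping, the dates, and the seven-day default. uv exposes an `--exclude-newer` option for this kind of cutoff; check its documentation for the exact values it accepts.

```python
from datetime import datetime, timedelta, timezone


def newest_release_past_cooldown(releases, now, cooldown=timedelta(days=7)):
    """Pick the most recently uploaded version that is at least `cooldown` old.

    `releases` maps a version string to its upload time (timezone-aware).
    Returns None when every release is still inside the cooldown window.
    """
    cutoff = now - cooldown
    eligible = [
        (uploaded, version)
        for version, uploaded in releases.items()
        if uploaded <= cutoff
    ]
    if not eligible:
        return None
    # Newest by upload time, not by version number.
    return max(eligible)[1]


# Hypothetical release history for some package.
now = datetime(2025, 6, 15, tzinfo=timezone.utc)
releases = {
    "1.4.0": datetime(2025, 5, 1, tzinfo=timezone.utc),
    "1.5.0": datetime(2025, 6, 1, tzinfo=timezone.utc),
    "1.5.1": datetime(2025, 6, 14, tzinfo=timezone.utc),  # one day old
}

print(newest_release_past_cooldown(releases, now))  # 1.5.0
```

The one-day-old release is skipped, which is the window in which a short-lived malicious upload would most likely still be live.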

40:56 So this advice has drastically changed over the past six months.

41:00 The best practice used to be auto update to latest version, period.

41:03 That used to be the advice and that's no longer the advice.

41:06 And it's kind of heartbreaking, especially if you use npm.

41:09 npm is just like under siege.

41:11 It is.

41:12 Yeah.

41:12 PyPI is as well, but it looks over at npm as thankful for its situation.

41:17 Number four.

41:18 Or I got to keep cruising here.

41:21 Four, cryptographic failures.

41:22 Cryptographic failures.

41:23 Yeah.

41:24 Not encrypting.

41:25 Encrypting using something really old.

41:28 You start off encrypted and then briefly you're not encrypted and then you're encrypted again.

41:32 You don't encrypt it when you're supposed to.

41:34 Also in this realm, one way hashing, not just reversible encryption, right?

41:41 It would probably fall in here.

41:42 Encrypting user passwords and storing them in the database along with the key.

41:47 Ideally, we would hash and we would salt and then hash user passwords.

41:52 That would be the best.

41:53 If you really, really, really, really are intense, you could pepper it too.

41:56 And no, I did not make that up.

41:58 That is a mathematical nerd joke, not an app sec joke.

42:01 But a salt is unique per user and the salt itself isn't really a secret.

42:06 Where a pepper is unique per system or per organization.

42:09 And it is a secret.

42:11 Right.

42:11 Like a secret key that you set and then it gets factored in there.

42:14 That's cool.

42:15 Yeah.

42:15 Yeah.

42:15 Also, maybe choose more modern hashing algorithms, right?

42:19 Obviously not MD5, but maybe something memory hard like Argon maybe.

42:23 I don't know.

42:23 Yes.

42:23 Argon2.

42:24 That would be better for sure.
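Here is a minimal sketch of salt, pepper, and a memory-hard hash working together. Note the swap: it uses `hashlib.scrypt` from the standard library rather than Argon2, purely so the example has no third-party dependency. For Argon2 itself you would reach for a dedicated library such as argon2-cffi. The `PEPPER` value, the function names, and the cost parameters are illustrative assumptions, not tuned recommendations.

```python
import hashlib
import hmac
import secrets

# Hypothetical pepper: one secret per system, loaded from a secret store
# in a real deployment, never stored next to the password hashes.
PEPPER = b"example-pepper-load-from-a-secret-store"


def _stretch(password: str, salt: bytes, pepper: bytes) -> bytes:
    # Mix in the pepper with HMAC, then run the memory-hard function.
    peppered = hmac.new(pepper, password.encode("utf-8"), hashlib.sha256).digest()
    return hashlib.scrypt(
        peppered, salt=salt, n=2**14, r=8, p=1,
        maxmem=64 * 1024 * 1024, dklen=32,
    )


def hash_password(password: str, pepper: bytes = PEPPER) -> tuple[bytes, bytes]:
    """Return (salt, digest). The salt is unique per user and not secret."""
    salt = secrets.token_bytes(16)
    return salt, _stretch(password, salt, pepper)


def verify_password(password: str, salt: bytes, expected: bytes,
                    pepper: bytes = PEPPER) -> bool:
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(_stretch(password, salt, pepper), expected)


salt, digest = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, digest))  # True
print(verify_password("wrong guess", salt, digest))  # False
```

Store the salt and digest in the database. The pepper stays outside it, so a leaked table alone is not enough to start guessing passwords.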

42:27 And this is something where if you're going to do it, it's very easy to look up what you're supposed to do on the internet.

42:33 This is something where you can ask the AI, like, are you using a good algorithm?

42:38 Are you doing this?

42:39 Like, make sure it's secure.

42:40 And then it's good as long as it does it.

42:43 Because one suggestion, if you are going to vibe code and not do the 400 other things we'll talk about later when we talk about my prompt library, is to ask it to list its security assumptions.

42:54 So whatever it is you prompt, you give it to make a thing.

42:56 You're like, make this, then do that, blah, blah, blah.

42:59 Please list all your security assumptions.

43:01 And it'll be like, oh, yeah, but obviously like you wouldn't do like authentication like that because that's terrible.

43:06 And like in production, you would do this other thing and you're like, oh, yeah, because it'll assume that you're going to change a bunch of things later that it doesn't tell you unless you ask it to tell you its assumptions.

43:18 Yeah.

43:18 We'll use no password here, but when you ship it, you're going to add that, right?

43:21 Like, no, I wasn't going to, but now I will.

43:23 All right.

43:24 I think one of the best known ones has got to be Little Bobby Tables and friends.

43:29 Number five, injection.

43:31 Yes.

43:32 So injection is tricking an application: you put your code, the malicious actor's code, into a place where there should be data, and you've tricked the application into thinking it's its own code.

43:43 And then either it executes it or it interprets it.

43:45 Like if there's an interpreter, there's a compiler, there's the potential for injection.

43:49 And it, yeah, we don't want to mix data in with commands.

43:54 We don't want to mix data in with anything that's going to be executed or interpreted.

43:58 And we do it a lot, Michael.

43:59 I know we make bad choices, don't we?

44:01 We make bad choices.

44:02 So obviously SQL injection is the number one in this world for sure.

44:08 And still it's popular.

44:10 Still people don't know.

44:11 It's still tricky.

44:12 I mean, we have certainly parametrized queries and ORMs and stuff that should be helping us or does help us if we choose to use them with this.
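A small sketch of what a parameterized query looks like with the standard library's `sqlite3` module. The table, the `find_student` helper, and the hostile input are made up for illustration. The point is that the value travels separately from the SQL text, so the database treats it as data only.

```python
import sqlite3

# In-memory database with a single illustrative table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE students (name TEXT)")
conn.execute("INSERT INTO students (name) VALUES (?)", ("Alice",))


def find_student(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # The "?" placeholder keeps `name` out of the SQL string entirely.
    # Never build this query with f-strings or string concatenation.
    return conn.execute(
        "SELECT name FROM students WHERE name = ?", (name,)
    ).fetchall()


hostile = "Robert'); DROP TABLE students;--"

print(find_student(conn, hostile))  # []
print(find_student(conn, "Alice"))  # [('Alice',)]
```

The hostile string simply fails to match any row, and the table is still there afterwards.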

44:19 However, I think other ones should just give them a quick shout out.

44:23 Like for example, if you're accepting JSON and converting it to a dictionary in Python, you can do MongoDB injection.

44:31 Like your password, you know, the Bobby Tables one is like quote, semicolon, drop table, you know, like that's what that looks like in T-SQL.

44:39 But in MongoDB, you can do queries that are dictionaries.

44:43 That's like kind of how you do your filtering.

44:44 So if you take something that would be a password in a JSON document, the password could be curly brace greater than, you know, one equals one.

44:54 Like a really complicated JSON dictionary that is actually the query that is equivalent.

44:58 So you got to be super careful there as well.

44:59 And that's really tricky to do that.
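One hedged way to guard against this kind of operator injection is to check types before anything from the request gets near a query. The sketch below needs no database: `parse_login` and the field names are hypothetical, and `$gt` is used as the example operator a hostile client might send in place of a string.

```python
import json


def parse_login(body: str) -> dict[str, str]:
    """Parse a JSON login body and insist that both fields are plain strings."""
    data = json.loads(body)
    if not isinstance(data, dict):
        raise ValueError("login body must be a JSON object")
    username = data.get("username")
    password = data.get("password")
    # A dict here (e.g. {"$gt": ""}) would be read by MongoDB as a query
    # operator rather than a value, so reject anything that is not a str.
    if not isinstance(username, str) or not isinstance(password, str):
        raise ValueError("username and password must be strings")
    return {"username": username, "password": password}


honest = '{"username": "alice", "password": "s3cret"}'
hostile = '{"username": "admin", "password": {"$gt": ""}}'

print(parse_login(honest))  # {'username': 'alice', 'password': 's3cret'}

try:
    parse_login(hostile)
except ValueError as error:
    print("rejected:", error)
```

A schema validation library can enforce the same rule declaratively. The essential part is that a nested object is rejected rather than passed through as a filter.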

45:02 And then also like the pickles and like serialization, deserialization.

45:06 There's a lot to this, not just SQL injection.
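The pickle concern can be shown with a harmless demonstration: unpickling calls whatever callable the payload names. The `Sneaky` class is a made-up example that only sorts a list, where a real attacker would pick something far less friendly. For untrusted input, a data-only format such as JSON avoids this class of problem.

```python
import json
import pickle


class Sneaky:
    """On unpickling, pickle calls the callable returned by __reduce__."""

    def __reduce__(self):
        # Harmless stand-in: an attacker would name a dangerous callable here.
        return (sorted, ([3, 1, 2],))


payload = pickle.dumps(Sneaky())

# Loading runs sorted([3, 1, 2]); we never get a Sneaky instance back.
loaded = pickle.loads(payload)
print(loaded)  # [1, 2, 3]

# JSON only ever produces plain data: dicts, lists, strings, numbers, etc.
safe = json.loads('{"user": "alice", "scores": [3, 1, 2]}')
print(safe["scores"])  # [3, 1, 2]
```

The takeaway is to only unpickle data you produced yourself and can trust end to end.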

45:09 It's a lot about input validation.

45:11 So using a parametrized query, so stored procedures, prepared statements, whatever you want to call them.

45:17 What that does is say, this is data, only treat it as data.

45:21 And then the database can do that.

45:24 But if we, on top of that, do input validation.

45:27 So like we're getting the thing that looks correct and we're rejecting, we're not trying to fix it.

45:33 We're just rejecting everything that looks not correct.

45:36 And then if we have to accept any special characters, we escape them or sanitize them out.

45:41 I prefer escaping.

45:42 I think it's weird to remove stuff.

45:43 That's my data.

45:44 I probably want it.

45:45 So like if you have to accept single quotes because you know you're going to have users named O'Malley, let's say.

45:50 Right.

45:50 So we accept the letters.

45:52 We accept the numbers.

45:53 We accept a single quote and some dashes, even though those are dangerous.
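The allow-list approach described here, accept what looks correct and reject everything else without trying to fix it, might be sketched like this. The exact character set and the 64-character limit are assumptions for illustration, and `validate_name` is a hypothetical helper.

```python
import re

# Allow-list: letters, digits, single quote, and dash, 1 to 64 characters.
NAME_PATTERN = re.compile(r"[A-Za-z0-9'\-]{1,64}")


def validate_name(value: str) -> str:
    """Return the name unchanged if it matches the allow-list, else raise."""
    # fullmatch requires the whole string to match, not just a prefix.
    if not isinstance(value, str) or NAME_PATTERN.fullmatch(value) is None:
        raise ValueError("name contains characters outside the allow-list")
    return value


print(validate_name("O'Malley"))  # O'Malley

try:
    validate_name("Robert'); DROP TABLE students;--")
except ValueError as error:
    print("rejected:", error)
```

Validation like this is an extra layer on top of parameterized queries, not a replacement for them. The single quote is still allowed through, which is exactly why the query layer must treat it as data.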